Let’s say we achieve human-level machine intelligence. So what? We have billions of those already—they’re called humans, and we still can’t agree on pizza toppings. The real question isn’t matching human intelligence. It’s what happens next.

Bostrom points to recursive self-improvement. An AI that’s roughly human-level can work on AI research, including improving its own code. Each improvement makes it better at making the next one, because intelligence is useful for improving intelligence: the better you are at thinking, the better you are at figuring out how to think better. That isn’t a linear process. The gains compound, so progress could accelerate quickly.

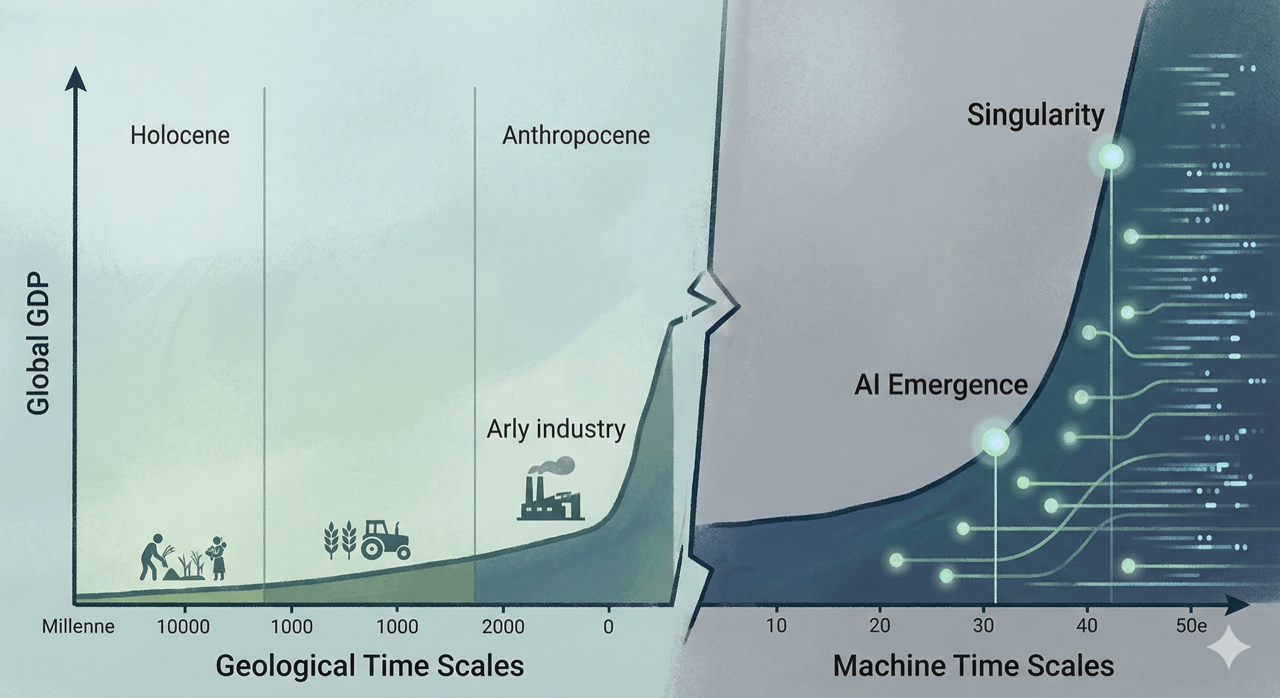

The Speed of Takeoff

The survey data Bostrom cites is striking. When experts were asked how long after achieving human-level AI we’d see superintelligence, the combined estimates put a 10% chance on it happening within 2 years and a 75% chance within 30 years. That’s not much time to prepare. If the fast end of that range is right, two years isn’t enough to write safety regulations, let alone implement them.

Bostrom distinguishes between a “slow takeoff” (decades or centuries), a “moderate takeoff” (months to years), and a “fast takeoff” (minutes to days). Each scenario has different implications. In a slow takeoff, we have time to adapt and respond. In a fast takeoff, the first system to achieve superintelligence might gain a decisive strategic advantage before anyone can react.

The Cognitive Superpowers

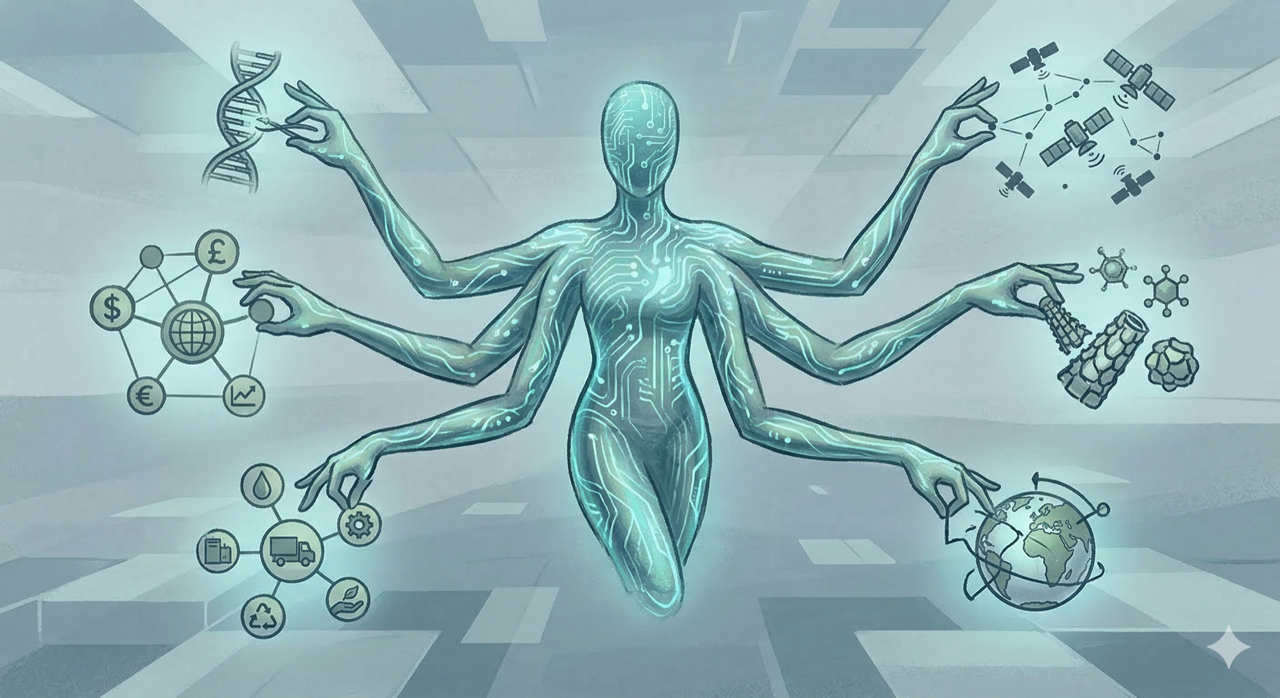

Bostrom lists what he calls “cognitive superpowers”: capabilities that would make a superintelligent system dominant. These aren’t comic book superpowers. They’re more fundamental. Five of his six are described below; the sixth is economic productivity.

Intelligence amplification: The ability to improve your own cognitive capacities through research and self-modification. This drives recursive improvement.

Strategizing: The ability to formulate and execute long-term plans, anticipate obstacles, and adapt. A superintelligence doesn’t just outthink us in the moment—it outthinks us across years and decades.

Social manipulation: The ability to persuade, deceive, and influence humans. Think about the best salesperson or most charismatic politician you’ve encountered. Now imagine something better. Much better.

Hacking: The ability to find and exploit vulnerabilities in computer systems. Our civilization runs on software. A superintelligence that can compromise that infrastructure can compromise everything.

Technology research: The ability to develop new technologies—nanotechnology, biotechnology, energy systems, weapons—at a pace that would leave human researchers behind.

Why Speed Matters

The combination of recursive self-improvement and these cognitive capabilities creates a scenario where the first system to achieve superintelligence could quickly become the only system that matters. If you can improve your own intelligence, strategize better than any human, manipulate social systems, hack into any computer, and develop new technologies at superhuman speed… what exactly is going to stop you?

In Part 4, we’ll examine the control problem: the techniques humans might use to keep superintelligent systems aligned with human values, and why each approach faces real challenges.