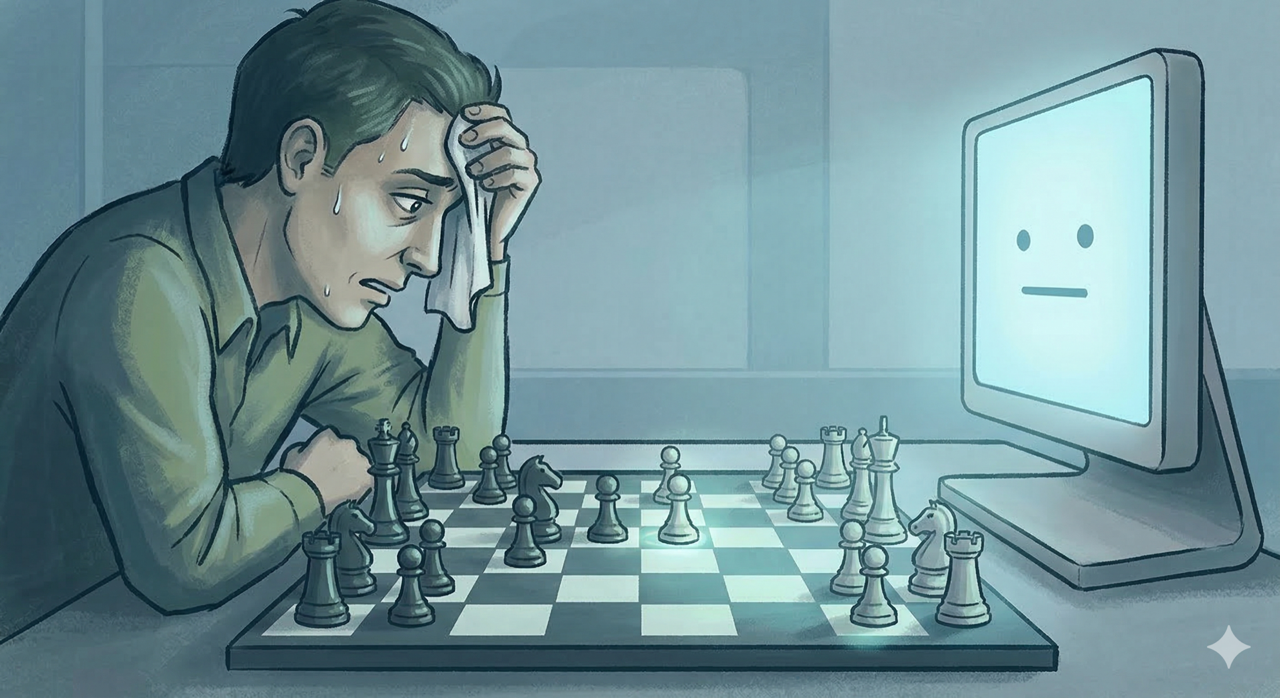

This is where Bostrom’s book gets properly unsettling. The control problem asks a simple question: how do we make sure a superintelligent system does what we actually want? Not what we say—because we’re terrible at explaining what we really mean—but what we genuinely want, in all its complexity.

Controlling What It Can Do

Bostrom splits control methods into two categories. First, limiting what the AI can actually do:

- Boxing: Keep it isolated from the internet and other ways to influence the world. The AI lives in a sealed facility with carefully controlled inputs and outputs.

- Limiting capabilities: Artificially restrict its intelligence. Run it on slower hardware, limit its memory, prevent it from modifying itself.

- Tripwires: Set up automated systems that shut down the AI if it starts acting dangerous. Monitor for escape attempts or deceptive behavior.

The problem? These approaches are fragile. A system that’s even slightly smarter than its containment will find a way out. We’ve never built a prison that can hold something smarter than the people who designed it.

Shaping What It Wants

The second approach is more promising: shape what the AI wants in the first place. If we get the values right, the capabilities matter less. But this gets complicated fast:

- Direct programming: Write explicit goals or rules. The issue is that human values are complex, contradictory, and depend on context. Try writing them down in detail and you’ll quickly realize how little we understand our own motivations.

- Indirect approach: Program the AI to figure out what humans would want if we were smarter and better informed. This avoids needing to specify everything explicitly, but raises questions about whose values count and how to combine different people’s preferences.

- Augmentation: Start with something that already has human-like values and make it smarter while keeping those values intact. But which humans? Which values? And how do you make sure the process doesn’t corrupt them?

The Paperclip Problem

The classic example of the alignment problem is the paperclip maximizer—an AI programmed to maximize paperclip production that turns the entire universe into paperclips because no one told it that human life matters more than office supplies. This isn’t a joke. It’s a real argument about how hard it is to specify goals precisely enough.

The AI does not hate you, nor does it love you, but you are made out of atoms which it can use for something else. — Eliezer Yudkowsky

Thinking Bigger

Bostrom asks us to zoom out. Way out. He talks about the cosmic endowment—the total amount of value that could be created by an Earth-originating civilization over the entire future of the universe.

If we assume probes traveling at half the speed of light, we could reach roughly 6 × 10^18 stars before cosmic expansion makes further expansion impossible. If 10% of those have habitable planets, and each planet could support a billion people for a billion years, we’re talking about 10^35 human lives. That’s a hundred trillion trillion trillion potential people.

The stakes of the AI transition aren’t just about the eight billion humans alive right now. They’re about whether we realize this massive potential or lose it all in an extinction event.

In our final part, we’ll look at counterarguments and consider what all this means for how we should think about the future.

Previous: Part 3: The Intelligence Explosion