Let’s be fair to the skeptics. Bostrom’s arguments aren’t universally accepted, and there are real reasons to question the whole framework. Before we wrap up, let’s look at the main objections.

Four Major Objections

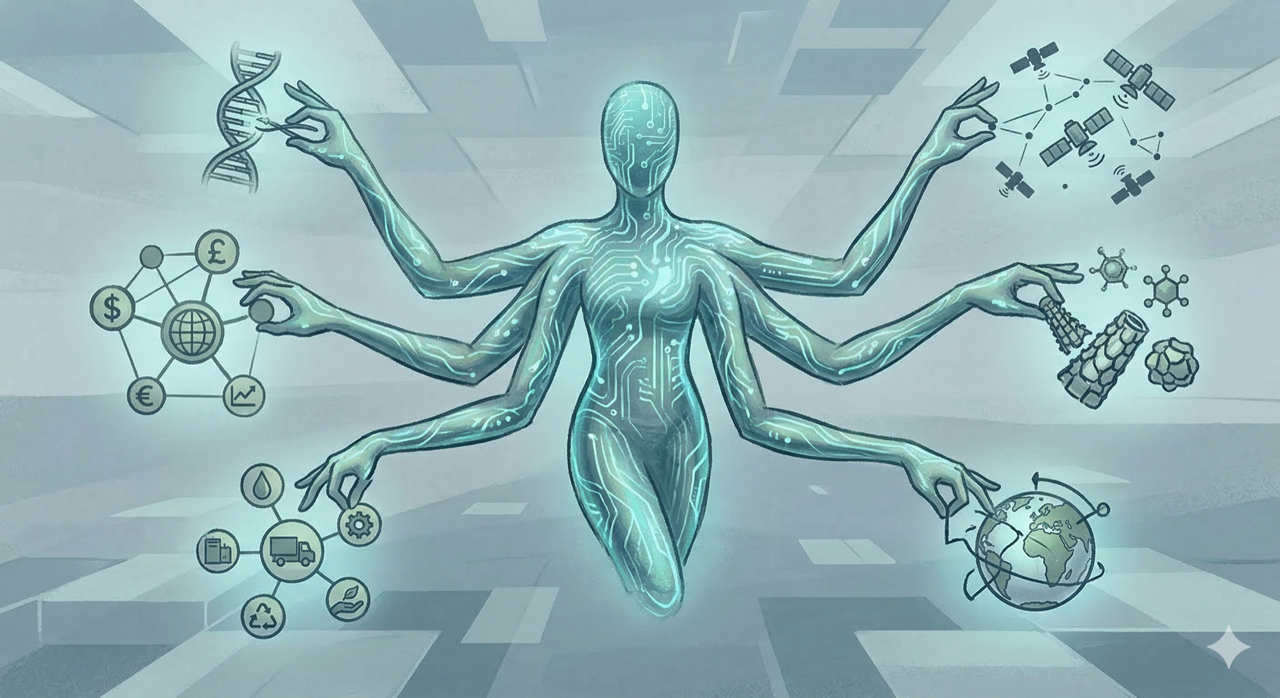

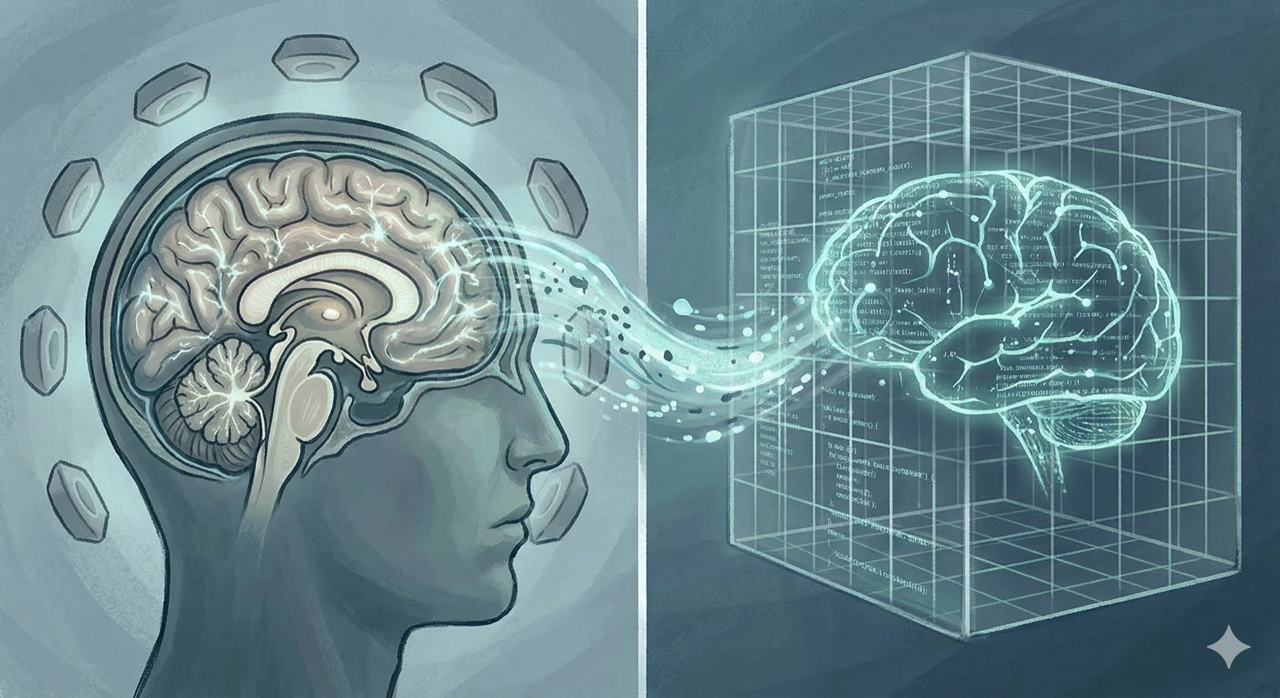

First objection: Maybe intelligence isn’t what we think it is. There might be no such thing as general intelligence, just a bunch of specialized abilities that don’t transfer between domains. If that’s true, there’s no single scale to be “super” on, and the idea of a general superintelligence falls apart.

Second objection: Physical limits might be tighter than we think. Maybe the brain is already close to optimal for its size and energy budget. Maybe there are fundamental limits on computation that prevent the kind of speedups Bostrom’s scenarios need.

Third objection: We might not make it that far. Societal collapse could get us first—nuclear war, bioweapons, climate catastrophe, or something else entirely.

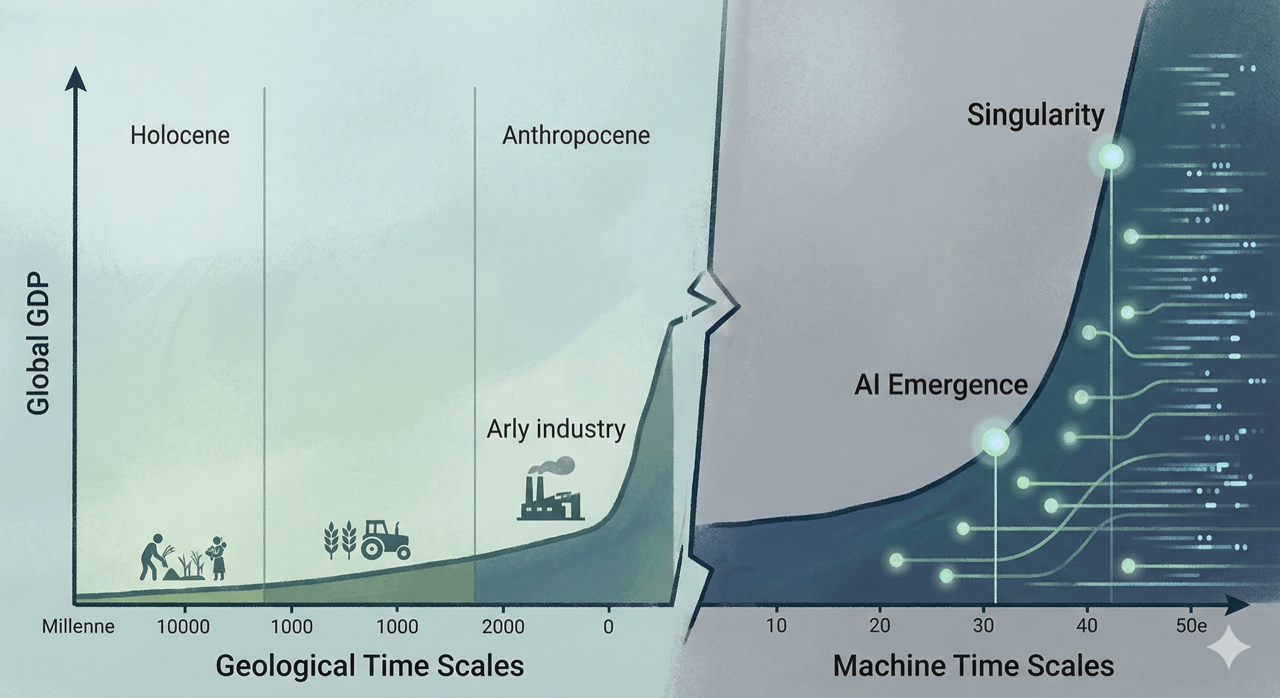

Fourth objection: AI development might be gradual and manageable. Maybe the intelligence explosion is a fantasy. Maybe improvements will be incremental, giving us time to adapt and regulate. This is the most hopeful view, but it’s also the one that sits least comfortably with the pace of recent AI progress.

What Now?

So where does this leave us? Bostrom’s book is really about perspective. He’s not trying to convince you that superintelligence is definitely coming in 2040, or that we’re all doomed. He’s trying to show that the possibility is real enough, the stakes are high enough, and the uncertainty is large enough that we should be taking these questions seriously.

Expert surveys suggest human-level AI has a “fairly sizeable chance” of being developed by mid-century, and a real chance of happening sooner or much later. That’s a wide range. It covers scenarios where we have decades to prepare and scenarios where we have years.

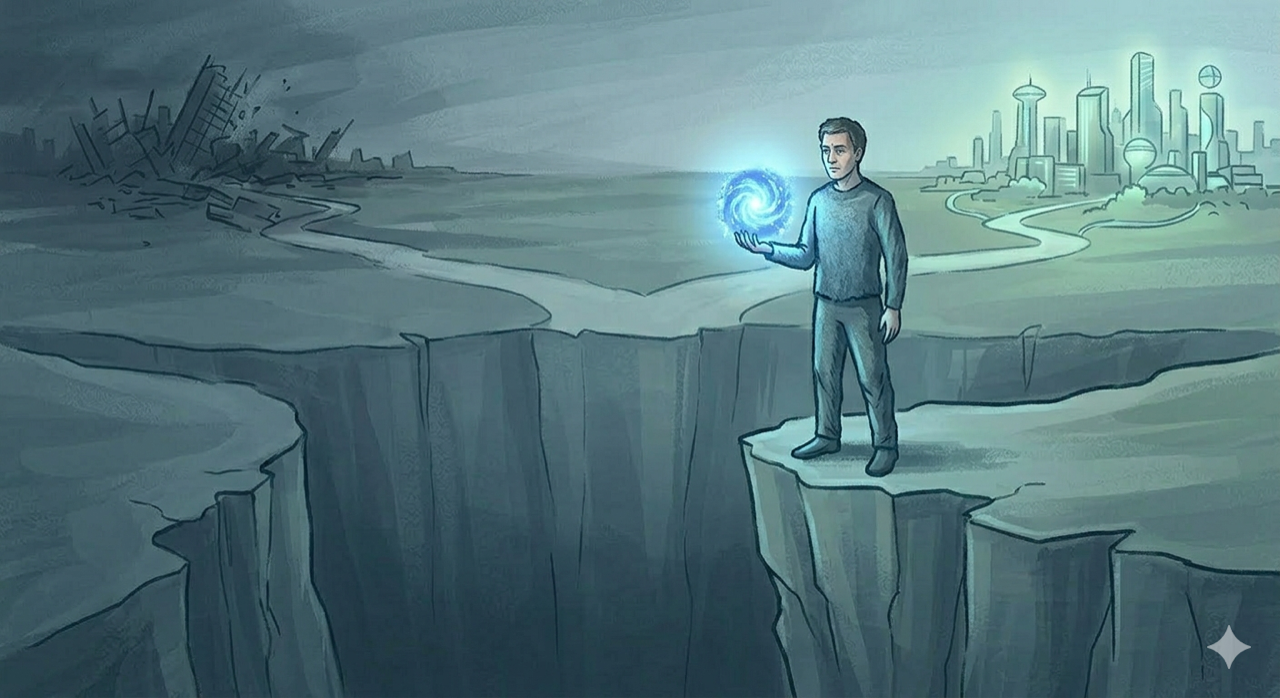

The key insight isn’t about prediction. It’s about preparation. If there’s even a small probability of a superintelligence transition in the coming decades, and there is, then getting it right matters enormously. What’s at stake is the cosmic endowment: everything a civilization could eventually do with the resources of the reachable universe. Next to that, everything else looks small.

The Last Invention

We’re in a strange moment in history. We’re building systems that might become our successors, and we’re doing it without really understanding what that means or how to control it. Every day, more computing power goes into AI research. Every month, new capabilities appear that would have seemed impossible a year before. The curve is bending upward, and we’re all just hoping it doesn’t break.

Bostrom’s contribution is giving us the conceptual tools to think about these questions clearly. The paths to superintelligence. The dynamics of takeoff. The control problem. The scale of what’s at stake. He doesn’t provide answers—those don’t exist yet—but he provides the right questions. And in a domain this important, asking the right questions matters.

The last invention. The final technology. The thing that makes everything else obsolete. We’re building it now, or something like it. The only question is whether we’ll be ready when it arrives.
